This year, Apple changed the game for desktop CPUs with its announcement of Apple Silicon.

A similar thing happened a year ago in the world of cloud computing.

AWS released a new type of instance backed by their custom-built ARM processors called AWS Graviton2.

They're supposed to have up to 40% better price-performance than their x86 counterparts.

Another huge recent update is the introduction of Graviton2-based Amazon RDS instances.

Let's run a couple of benchmarks and load-test a real-world backend application to see how good ARM servers are and how easy they are to use.

Performance

I compared a t4g.small (ARM) instance to a t3.small (x86) EC2 instance.

Currently, the on-demand hourly cost in the us-east-1 region is $0.0208 for t3.small (x86) and $0.0168 for t4g.small (ARM).

That makes the ARM-backed instance about 19% cheaper before performance even enters the picture.
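The saving follows directly from the two hourly prices quoted above. A quick sketch of the arithmetic (the function and variable names are only illustrative):

```python
# On-demand hourly prices in us-east-1, as quoted above (USD).
T3_SMALL_X86 = 0.0208
T4G_SMALL_ARM = 0.0168


def percent_cheaper(baseline: float, candidate: float) -> float:
    """Return how much cheaper `candidate` is than `baseline`, in percent."""
    return (baseline - candidate) / baseline * 100


saving = percent_cheaper(T3_SMALL_X86, T4G_SMALL_ARM)
print(f"t4g.small is {saving:.1f}% cheaper per hour")
```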

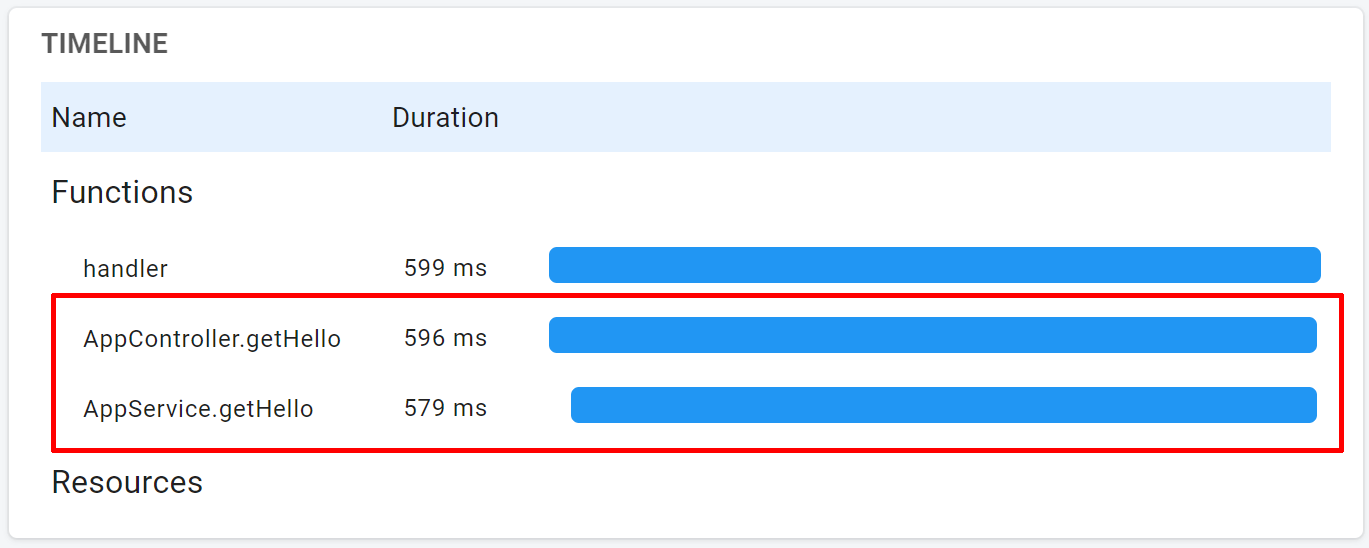

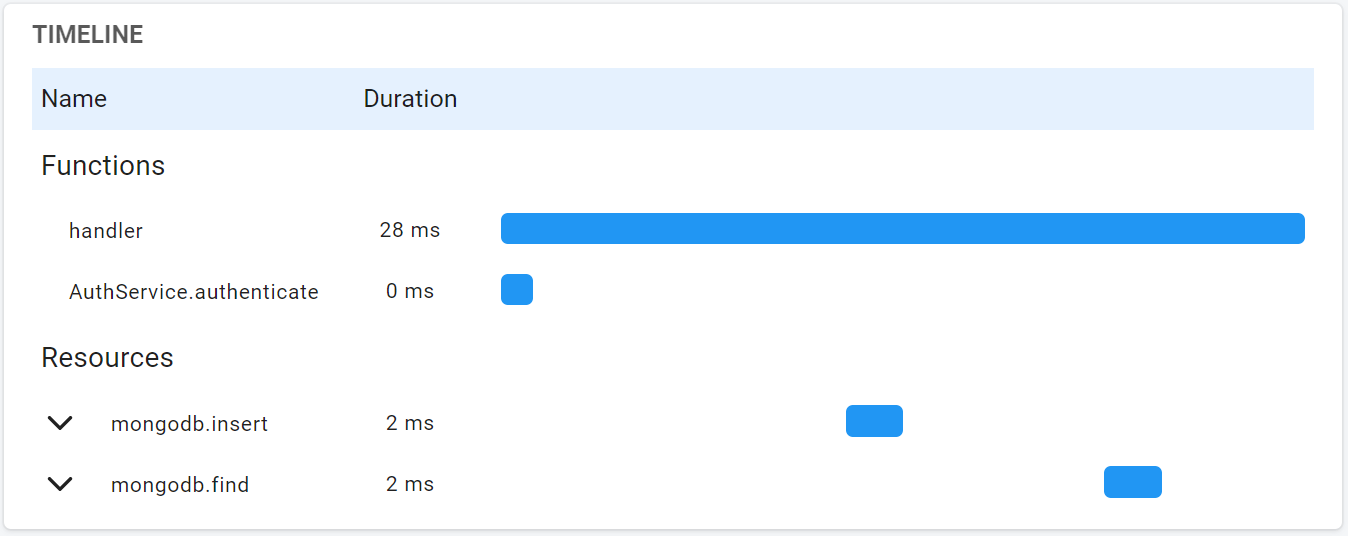

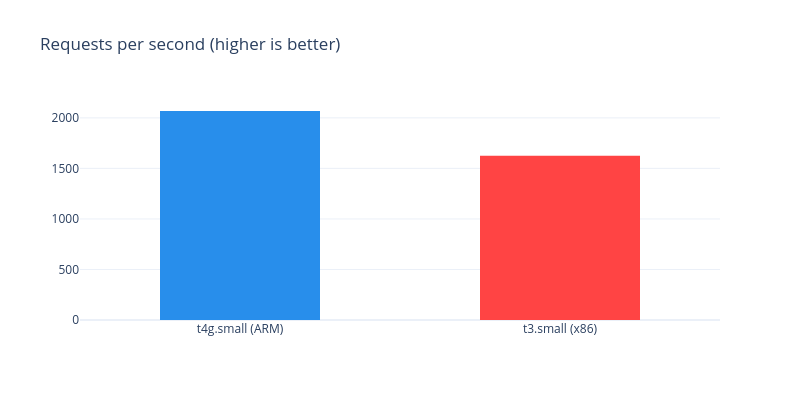

First, I ran a load test with wrk against a fresh recap.dev setup.

The setup is a docker-compose template running 4 processes.

A handler process puts every request into a RabbitMQ queue.

A separate background process inserts traces in batches of 1000 into a PostgreSQL database.

I ran wrk on a t3.2xlarge instance in the same region using the following command:

It bombarded the target instance with trace requests for 5 minutes using 24 threads and 1000 HTTP connections.
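For reference, a wrk invocation with those parameters could be assembled roughly like this. It is a sketch built only from the numbers in the description above: the target URL, port, and path are hypothetical placeholders, and any Lua script needed to send trace payloads (passed to wrk with `-s`) is not shown.

```python
import shlex


def build_wrk_command(url: str, threads: int = 24, connections: int = 1000,
                      duration: str = "5m") -> list[str]:
    """Build a wrk argv list: -t threads, -c open connections, -d duration."""
    return ["wrk", f"-t{threads}", f"-c{connections}", f"-d{duration}", url]


# Hypothetical target; replace with the address of the instance under test.
TARGET_URL = "http://203.0.113.10:8080/traces"

cmd = build_wrk_command(TARGET_URL)
print(shlex.join(cmd))
```

The resulting list can be passed to `subprocess.run(cmd, check=True)` on a machine where wrk is installed.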

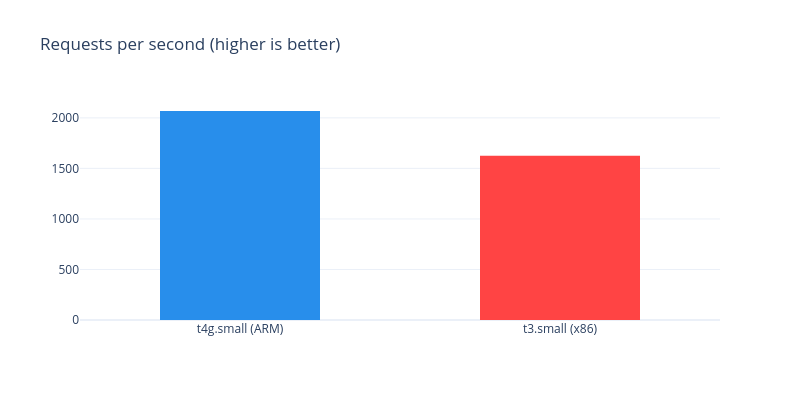

This is the result I got for the t4g.small (ARM) instance:

For the t3.small (x86) instance:

The ARM-backed instance served 27% more requests per second and responded 26% faster on average.

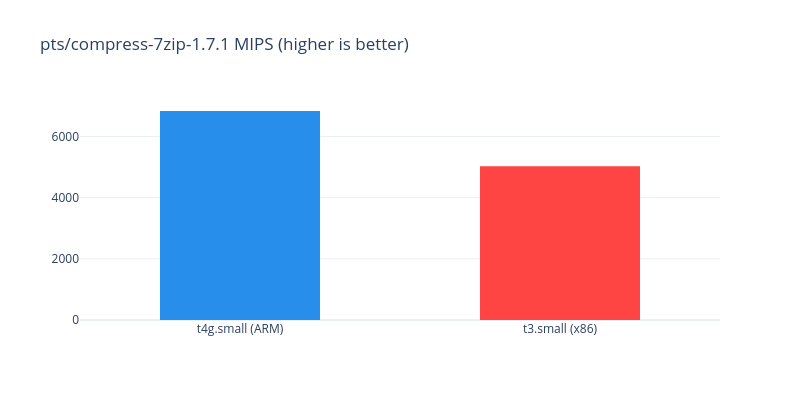

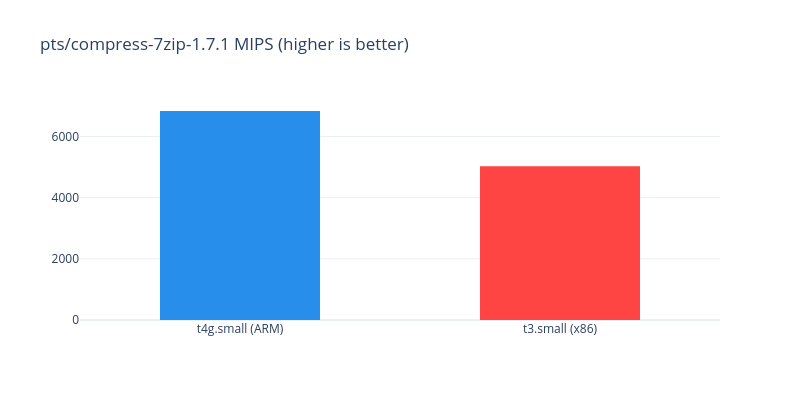

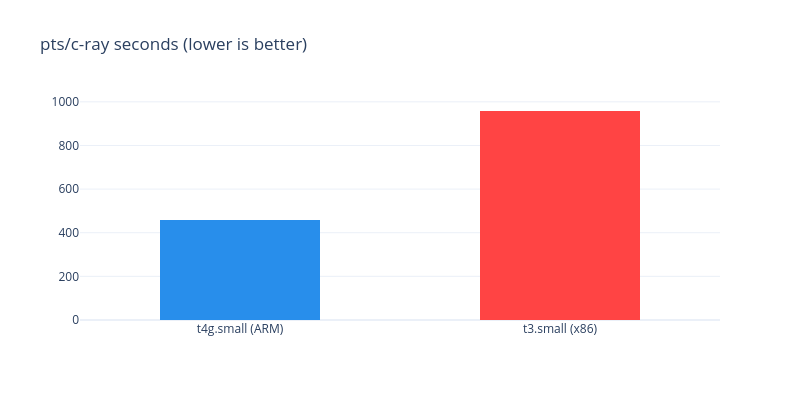

Then I ran a couple of benchmarks from the Phoronix Test Suite.

pts/compress-7zip-1.7.1 gave 6833 MIPS on t4g.small (ARM) versus 5029 MIPS on t3.small (x86). That is about 36% higher on the ARM processor.

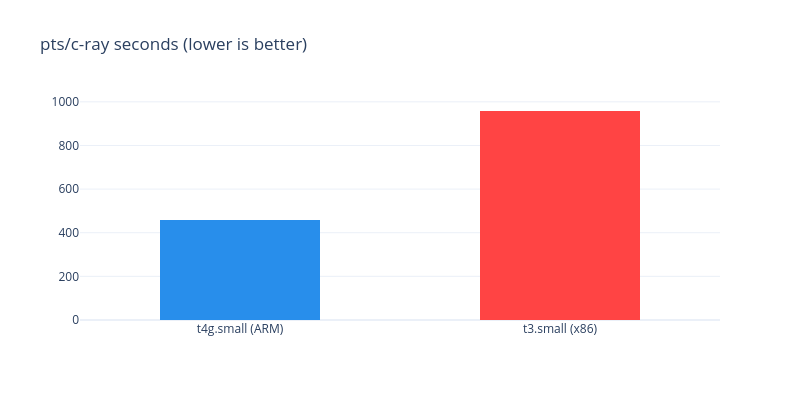

The ARM-backed server finished the pts/c-ray benchmark more than twice as fast on average: 958 seconds for x86 versus just 458 seconds for ARM.

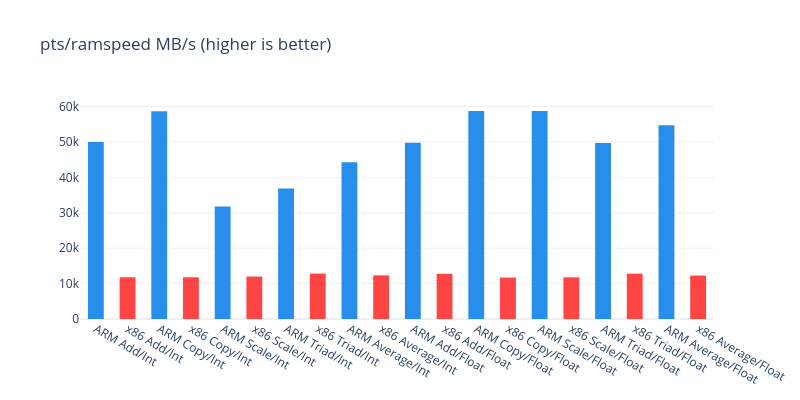

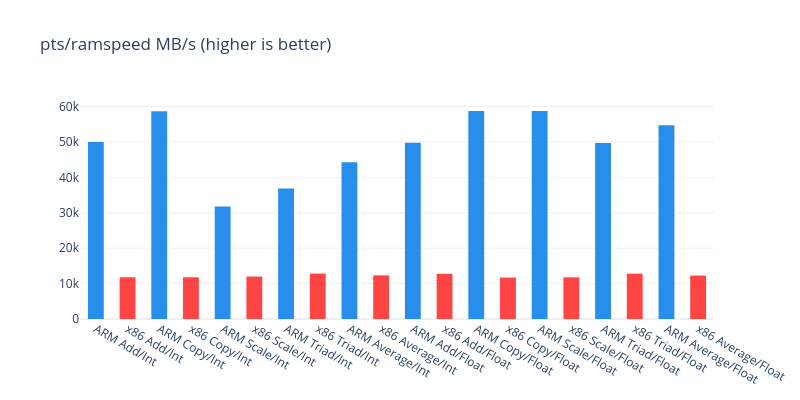

I also ran a bunch of RAM speed tests from pts/ramspeed that measure memory throughput on different operations.

| Benchmark Type | t4g.small (ARM) | t3.small (x86) |
|---|---|---|
| Add/Integer | 50000 MB/s | 13008 MB/s |
| Copy/Integer | 58650 MB/s | 11772 MB/s |
| Scale/Integer | 31753 MB/s | 11989 MB/s |
| Triad/Integer | 36869 MB/s | 12818 MB/s |
| Average/Integer | 44280 MB/s | 12314 MB/s |
| Add/Floating Point | 49775 MB/s | 12750 MB/s |
| Copy/Floating Point | 58749 MB/s | 11694 MB/s |
| Scale/Floating Point | 58721 MB/s | 11765 MB/s |
| Triad/Floating Point | 49667 MB/s | 12809 MB/s |
| Average/Floating Point | 54716 MB/s | 12260 MB/s |

In short, memory throughput on the t4g.small equipped with a Graviton2 processor was roughly 2.6 to 5 times higher, depending on the operation.
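The per-operation ratios can be derived from the table. A small sketch, with the numbers copied from the pts/ramspeed results above:

```python
# (ARM MB/s, x86 MB/s) pairs copied from the pts/ramspeed table above.
RAMSPEED = {
    "Add/Integer": (50000, 13008),
    "Copy/Integer": (58650, 11772),
    "Scale/Integer": (31753, 11989),
    "Triad/Integer": (36869, 12818),
    "Average/Integer": (44280, 12314),
    "Add/Floating Point": (49775, 12750),
    "Copy/Floating Point": (58749, 11694),
    "Scale/Floating Point": (58721, 11765),
    "Triad/Floating Point": (49667, 12809),
    "Average/Floating Point": (54716, 12260),
}

# Throughput ratio of ARM over x86 for every operation.
ratios = {name: arm / x86 for name, (arm, x86) in RAMSPEED.items()}

for name, ratio in ratios.items():
    print(f"{name:<24} {ratio:.2f}x")

slowest = min(ratios, key=ratios.get)
fastest = max(ratios, key=ratios.get)
print(f"smallest gap: {slowest} ({ratios[slowest]:.2f}x)")
print(f"largest gap:  {fastest} ({ratios[fastest]:.2f}x)")
```

The smallest gap is on Scale/Integer (about 2.65x) and the largest on Copy/Floating Point (about 5.02x).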

Judging by performance and instance price alone, switching to ARM-based instances is a no-brainer: you get more power for less money.
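One way to put a number on "more power for less money" is to divide the benchmark results by the hourly price. The sketch below uses the 7-Zip and c-ray figures and the on-demand prices quoted earlier; the two metrics (MIPS per dollar-hour and cost of one c-ray run) are my own rough framing, not something the Phoronix Test Suite reports.

```python
# Figures quoted earlier in this post.
PRICE_PER_HOUR = {"t4g.small": 0.0168, "t3.small": 0.0208}  # USD, us-east-1
SEVEN_ZIP_MIPS = {"t4g.small": 6833, "t3.small": 5029}      # higher is better
C_RAY_SECONDS = {"t4g.small": 458, "t3.small": 958}         # lower is better


def mips_per_dollar_hour(instance: str) -> float:
    """7-Zip MIPS delivered per dollar of hourly spend."""
    return SEVEN_ZIP_MIPS[instance] / PRICE_PER_HOUR[instance]


def c_ray_cost(instance: str) -> float:
    """Instance cost in USD for the duration of one c-ray run."""
    return C_RAY_SECONDS[instance] / 3600 * PRICE_PER_HOUR[instance]


mips_advantage = mips_per_dollar_hour("t4g.small") / mips_per_dollar_hour("t3.small")
cost_advantage = c_ray_cost("t3.small") / c_ray_cost("t4g.small")

print(f"7-Zip throughput per dollar: {mips_advantage:.2f}x in favor of ARM")
print(f"c-ray cost per run:          {cost_advantage:.2f}x cheaper on ARM")
```

By this rough measure the ARM instance delivers about 1.7 times the 7-Zip throughput per dollar, and a c-ray run costs about 2.6 times less.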

Compatibility

The big question when switching processor architectures is compatibility.

I found that a lot of things were already recompiled for the ARM processors.

Most importantly, Docker was available as .rpm and .deb packages, and so were most of the images (yes, images need to be built for each architecture).
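Because images are architecture-specific, scripts sometimes need to know which variant applies to the current host. A minimal sketch, assuming the usual values returned by Python's `platform.machine()` and the `linux/amd64` and `linux/arm64` platform strings Docker uses; the `docker_platform` helper itself is hypothetical:

```python
import platform
from typing import Optional

# Common `platform.machine()` values mapped to Docker platform strings.
_DOCKER_PLATFORMS = {
    "x86_64": "linux/amd64",
    "amd64": "linux/amd64",
    "aarch64": "linux/arm64",
    "arm64": "linux/arm64",
}


def docker_platform(machine: Optional[str] = None) -> str:
    """Map a machine name (default: the current host) to a Docker platform."""
    name = (machine or platform.machine()).lower()
    if name not in _DOCKER_PLATFORMS:
        raise ValueError(f"unrecognized architecture: {name!r}")
    return _DOCKER_PLATFORMS[name]


print(docker_platform("aarch64"))  # what a Graviton2 host running Linux reports
print(docker_platform("x86_64"))   # what a t3 (x86) host reports
```

Calling `docker_platform()` with no argument uses the architecture of the machine the script runs on.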

Docker-compose, however, wasn't, which was a huge bummer for me.

I had to jump through some hoops building several dependencies from source code to make it work.

The situation will hopefully improve as ARM adoption on servers grows, but right now you might pay more in working hours than you save by migrating.

RDS (the AWS managed RDBMS service) on Graviton2 is where I think the real win-win is.

You don't have to do any setup, and you still get all the benefits of an ARM processor on your server.

We also made sure recap.dev is easy to run on ARM processors: we introduced multi-arch Docker images and made pre-built ARM AMIs available on AWS.